As part of my final-year studies, two other students (one from fashion and one from product design) and I were given a brief from Fjord Design to envision the future of Zero UI. Over the year we explored the Zero UI space, looked at how we could challenge it, and developed an experimental proof of concept to learn from.

The literature described Zero UI as any interface that lacks a predominantly graphical UI, is seamless or integrated into the environment, and uses some form of AI to respond to interactions. We quickly discovered that a great deal of the discussion surrounding Zero UI was about bringing conversational interfaces to existing services, and that other types of interfaces weren't being explored as actively.

The initial brief was very technology-centred, and we quickly reframed it from making better services to augmenting human interaction and experiences. We wanted to shift the predominant focus of current Zero UI development away from creating conversational bridges to existing services and towards aiding the way people interact with their environments and the people around them. In doing this, we made a conscious decision to explore Zero UI through non-conversational interfaces and to focus on how we could apply the technology in a social context. We pitched this rebrief back to Fjord, and they were interested in exploring this angle as well. Due to the nature of their business, Fjord was much more interested in learning something from our work than in being handed a finished product, so we ran experiments to learn from.

Our biggest experiment was creating a device aimed at giving people with visual impairments access to non-verbal social cues. We came up with this concept because visually impaired people are well suited to using non-graphical interfaces and don't have access to as much non-verbal communication as people without such impairments. While we think this technology could have broader impact, especially in customer service environments, visually impaired people were the group that stood to benefit from it the most.

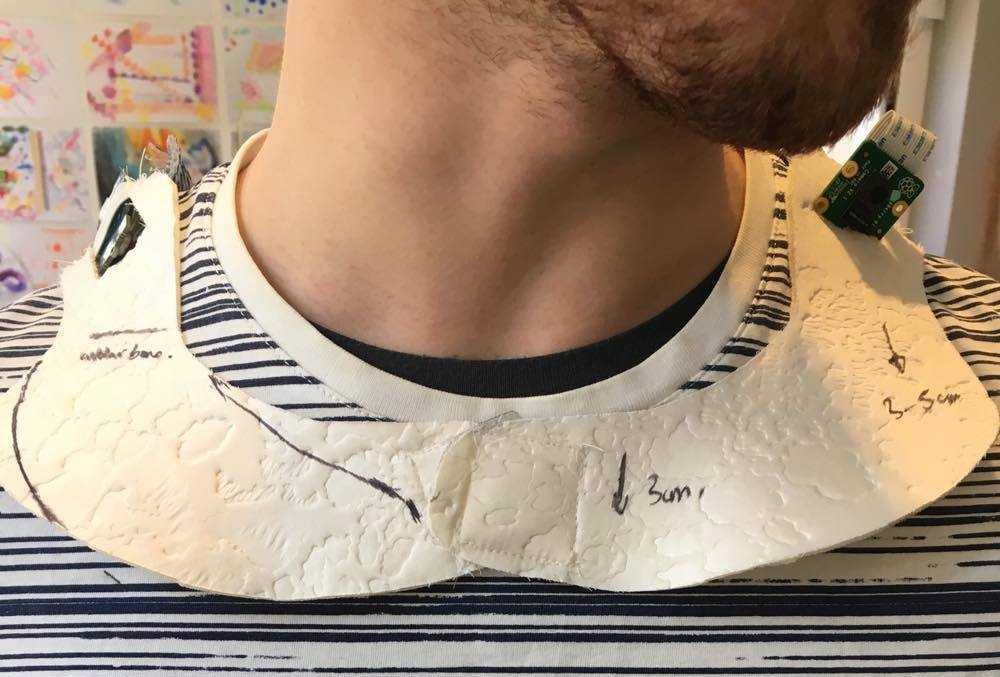

We researched visual impairments and met with people at Guide Dogs NSW / ACT and Blind Sports NSW to better understand daily life for visually impaired people and to incorporate this learning into our proof of concept. The device we designed uses a Raspberry Pi running a custom Python script, a camera and a haptic motor, in conjunction with the Microsoft Emotion Recognition API, to provide real-time haptic feedback about the emotions of the people in front of the wearer. The API uses the facial expressions it detects in pictures from the camera to determine emotions, and the Pi then translates these into a haptic pattern.
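
To give a feel for that last translation step, here is a minimal sketch of how emotion scores could be turned into a haptic pattern. It is not our original script. The pattern table, the 0.5 confidence threshold and the function names are all assumptions made for illustration, and the motor is passed in as a plain callable so the sketch does not depend on any particular GPIO library. It assumes the API result has already been reduced to a dictionary of emotion names and scores between 0 and 1.

```python
import time
from typing import Callable, Dict, List, Optional, Tuple

Pulse = Tuple[float, float]  # (seconds motor on, seconds motor off)

# Hypothetical pulse patterns; the real device's patterns may have differed.
PATTERNS: Dict[str, List[Pulse]] = {
    "happiness": [(0.1, 0.1), (0.1, 0.1)],         # two short taps
    "sadness": [(0.6, 0.2)],                        # one long buzz
    "anger": [(0.3, 0.1), (0.3, 0.1), (0.3, 0.1)],  # three firm pulses
    "surprise": [(0.05, 0.05)] * 4,                 # quick flutter
}


def dominant_emotion(scores: Dict[str, float], threshold: float = 0.5) -> Optional[str]:
    """Return the highest-scoring emotion, or None if it is neutral or too weak."""
    if not scores:
        return None
    emotion, score = max(scores.items(), key=lambda item: item[1])
    if emotion == "neutral" or score < threshold:
        return None
    return emotion


def pattern_for(scores: Dict[str, float], threshold: float = 0.5) -> List[Pulse]:
    """Map a set of emotion scores to a pulse pattern (empty list means stay silent)."""
    emotion = dominant_emotion(scores, threshold)
    if emotion is None:
        return []
    return PATTERNS.get(emotion, [])


def play(pattern: List[Pulse],
         set_motor: Callable[[bool], None],
         sleep: Callable[[float], None] = time.sleep) -> None:
    """Drive the motor through the pattern; set_motor(True/False) switches it on/off."""
    for on_time, off_time in pattern:
        set_motor(True)
        sleep(on_time)
        set_motor(False)
        sleep(off_time)
```

On the Pi, `set_motor` would be whatever function switches the pin driving the haptic motor. Staying silent for neutral or low-confidence results is a design choice in this sketch, so that the wearer only feels feedback when there is a clear cue to convey.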

As our final deliverable, we presented recommendations back to the directors of Fjord Design in Sydney and Melbourne (via telepresence) in their weekly meeting.